Living on the Edge: Eventing for a New Dimension

A practical architecture guide for resilient, secure edge-to-core messaging. Learn how to build systems that thrive in disconnected, distributed environments.

By Bruno Baloi

Executive Summary

Edge computing is no longer "a few IoT devices sending telemetry." It's a full operational domain where the physical world meets software systems. That shift changes the rules: connectivity becomes intermittent, security becomes adversarial, data distribution becomes granular, and observability becomes mandatory.

This guide explains how to build resilient edge-to-core systems using eventing patterns such as: separating edge and core realms, store-and-forward for offline tolerance, flow control (filtering, mapping, traffic shaping, weighted routing), hybrid eventing (pub/sub + streaming), full event mesh topologies (leaf nodes, hubs, superclusters), and end-to-end traceability for real operational awareness.

"The edge expands what's possible—but only if you design for disconnection, exposure, and scale."

Key Takeaways

- The edge introduces four recurring constraints: connectivity, security, distribution, observability.

- Treat edge and core as separate operational realms connected by controlled paths.

- Use store-and-forward to prevent data loss and decouple edge from core availability.

- Use flow control to right-size streams and enforce routing policy without glue code.

- A hybrid event platform supports both real-time signals and durable streams across the edge-core continuum.

Defining Edge

What "Edge" Means in Modern Architecture

The thirst for knowledge and new experiences is encoded into the human condition. We are designed to explore and push boundaries. Throughout history, walking gave way to riding, then sailing, then flying. Now we prepare for space travel—the edge of our known universe.

IT follows a similar arc. A single data center became many. Cloud became the next domain. And now we push boundaries again by connecting the physical world to the digital world. Across the board, connectivity was always the key ingredient: it doesn't matter how far we go, we still want to dial home.

Definition: What is edge computing?

Edge computing is the practice of running compute, messaging, and decision-making closer to where data is generated (devices, sensors, machines, vehicles, gateways)—often outside the predictable environment of a data center. In practice, the modern edge lies at the intersection of Operational Technology (OT) and Information Technology (IT): machines meet software; sensors meet services; vehicles meet analytics.

"The edge is not a place. It's a new operational dimension."

The Four Hard Problems of Edge-to-Core Systems

Connectivity

Edge connections can be intermittent. Devices may roam out of range, lose power, or encounter unstable networks and upstream failures.

Security

Edge systems are often "in the open." They are more vulnerable to tampering, malicious data injection, and unsafe commands crossing into core systems.

Distribution

Edge data must be routed to the right processing destinations and throttled to protect core capacity. Commands must be targeted to the right devices with precision.

Observability

We need a bird's-eye view over devices and the infrastructure connecting edge to core. Awareness is what enables safe operation at scale.

Where Edge-to-Core Eventing Shows Up

Industrial IoT & Predictive Maintenance

MachineMetrics uses NATS at the edge to collect high-frequency machine data from manufacturing floors, enabling real-time anomaly detection and predictive maintenance.

No-Code AI for Factory Optimization

Intelecy streams sensor data from tens of thousands of factory sensors through Synadia Cloud, returning ML-driven insights in under one second round-trip.

Real-Time Energy Trading

Eviny modernized its messaging infrastructure with Synadia Platform, moving critical energy trading data in milliseconds with zero data loss across 39 hydropower plants.

Oil & Gas Edge Data Streaming

Rivitt captures machine data from drilling rigs and field devices under harsh conditions, streaming high-volume data in real time with Synadia Cloud, even with intermittent connectivity.

Edge Energy Management

PowerFlex uses NATS with JetStream to manage EV charging stations, battery storage, and solar arrays at the edge, buffering data locally and syncing with the cloud even through intermittent connectivity.

In practice: If you're building any of the above, you need an eventing layer that tolerates disconnects, enforces strict boundary security, and supports granular routing.

The Case for Separation

Edge and Core as Different Realms

First we built monolithic systems to minimize network usage. Then networks improved and systems decomposed into services and microservices. Now we are in a new phase: processing is moving outward, and connectivity is less predictable.

This requires a different lens: treat edge and core as separate operational realms, even if data flows over a unified path.

"Separation prevents edge volatility from becoming core volatility."

Separation Solves Three Recurring Edge Challenges

Asynchronous Communication + Store-and-Forward

Edge systems should rely on asynchronous, event-based communication. Synchronous HTTP can be heavier and slower than lightweight messaging, and it often needs additional infrastructure to scale.

Edge endpoints frequently lose connectivity. To avoid data loss and brittle retry storms, use store-and-forward:

- Collect events locally

- Forward upstream when connected

- Continue collecting while disconnected

- Catch up when connectivity returns
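The four steps above can be sketched as a small in-memory buffer. This is a minimal illustration, not the API of any particular platform: the `StoreAndForward` and `FlakyLink` classes, the `send` callable, and the `max_buffered` limit are all assumptions made for the example, and a real edge node would persist the buffer to disk.

```python
from collections import deque


class StoreAndForward:
    """Buffer events locally and forward them upstream when the link allows."""

    def __init__(self, send, max_buffered=10_000):
        self._send = send  # hypothetical uplink; raises ConnectionError when offline
        self._buffer = deque(maxlen=max_buffered)  # when full, the oldest event is dropped

    def publish(self, event):
        """Store first, then try to forward everything that is pending."""
        self._buffer.append(event)
        return self.flush()

    def flush(self):
        """Forward oldest-first and stop at the first failure."""
        forwarded = 0
        while self._buffer:
            try:
                self._send(self._buffer[0])
            except ConnectionError:
                break  # still offline: keep the event for the next attempt
            self._buffer.popleft()  # remove only after a successful send
            forwarded += 1
        return forwarded

    def pending(self):
        return len(self._buffer)


class FlakyLink:
    """Stand-in for an uplink that can be switched on and off."""

    def __init__(self):
        self.online = False
        self.received = []

    def send(self, event):
        if not self.online:
            raise ConnectionError("uplink down")
        self.received.append(event)


link = FlakyLink()
edge = StoreAndForward(link.send)
edge.publish("temp=20")  # collected while disconnected
edge.publish("temp=21")
pending_while_offline = edge.pending()
link.online = True
edge.publish("temp=22")  # connectivity is back: the backlog drains in order
```

Because an event is removed only after a successful send, a failure between the send and the removal can produce a duplicate upstream, so consumers in a design like this should tolerate at-least-once delivery.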

Different Work Belongs in Different Domains

Edge systems often do:

- Filtering and transformation

- Local inference/anomaly detection

- Immediate decision loops

Core systems often do:

- Deep analytics

- Long-lived workflows

- Global coordination and retention

Separation prevents the edge from becoming a fragile extension of the core.

Security Realms + Constrained Boundary Paths

Because edge devices are exposed, keep edge and core security realms distinct:

- Encrypt boundary links

- Scope credentials tightly

- Constrain the subjects/channels that cross

- Avoid accidental access to sensitive control channels
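One way to picture tightly scoped credentials and constrained boundary subjects is a deny-first allow-list checked token by token. The sketch below is illustrative only: `BoundaryCredential`, `within_scope`, and the subject names are hypothetical, and a real platform would enforce this in its own permission model rather than in application code.

```python
def within_scope(scope, subject):
    """True when `subject` equals `scope` or sits beneath it, token by token."""
    scope_tokens = scope.split(".")
    return subject.split(".")[: len(scope_tokens)] == scope_tokens


class BoundaryCredential:
    """Hypothetical credential: what one edge identity may publish across the boundary."""

    def __init__(self, name, allow, deny=()):
        self.name = name
        self.allow = tuple(allow)
        self.deny = tuple(deny)

    def may_publish(self, subject):
        # Deny rules win, and anything not explicitly allowed is rejected.
        if any(within_scope(d, subject) for d in self.deny):
            return False
        return any(within_scope(a, subject) for a in self.allow)


gateway = BoundaryCredential(
    "site-7-gateway",
    allow=["telemetry.site-7"],
    deny=["telemetry.site-7.debug"],
)
```

Comparing whole tokens rather than string prefixes matters here: `telemetry.site-70` shares a string prefix with `telemetry.site-7` but is a different site, and the token comparison rejects it.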

Healthcare Routing and Compliance

Continuous patient monitoring can generate broad telemetry. But elevated risk events may require forwarding detailed history to emergency services, while subsets route to nurses or specialists.

This illustrates a core truth: edge collection may be broad, but core consumption must be filtered and routed precisely to manage compliance and reduce risk.

Flow Control

Filtering, Mapping, Traffic Shaping, Weighted Distribution

Flow control is how you craft event paths intentionally—so consumers receive only what they need, traffic is shaped predictably, and rollout risk is reduced.

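A platform bridging edge and core should support four flow control capabilities: subject filtering, subject mapping and traffic shaping, weighted routing, and a choice between push and pull consumption.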

"Flow control is the difference between a useful event stream and a firehose."

3.1 Subject Filtering: Solve the Firehose Problem

A unified channel is great for analytics, but terrible for targeted operational processing.

Example taxonomy:

orders.<state>.<city>.<storeid>.<orderid>

Chicago store publishes: orders.il.chicago.1234.oid-9876

Filtering allows consumers to subscribe by intent:

- orders.ny.ny.> : all New York orders

- orders.il.chicago.1234.* : all orders from one specific store
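To make the wildcard semantics concrete, here is a minimal matcher following the NATS convention that `*` matches exactly one token and `>` matches one or more trailing tokens. The function name `subject_matches` is made up for this sketch; in practice the broker performs this matching, not the application.

```python
def subject_matches(pattern, subject):
    """Match a dot-separated subject against a pattern with '*' and '>' wildcards."""
    p_tokens = pattern.split(".")
    s_tokens = subject.split(".")
    for i, p in enumerate(p_tokens):
        if p == ">":
            # '>' is only valid as the last token and needs at least one token to match.
            return i == len(p_tokens) - 1 and len(s_tokens) > i
        if i >= len(s_tokens):
            return False  # pattern is longer than the subject
        if p != "*" and p != s_tokens[i]:
            return False  # literal token mismatch
    return len(p_tokens) == len(s_tokens)


published = "orders.il.chicago.1234.oid-9876"
matches_store = subject_matches("orders.il.chicago.1234.*", published)
matches_state = subject_matches("orders.il.>", published)
matches_new_york = subject_matches("orders.ny.ny.>", published)
```

With this taxonomy, a consumer narrows or widens what it receives by changing only the subscription pattern, which is the sense in which filtering is declarative.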

Benefits

- Less code (filtering is declarative)

- Lower ops cost (less glue infrastructure)

- More flexibility (change subscriptions without app refactors)

3.2 Subject Mapping / Traffic Shaping: Declarative Routing

Instead of writing external router services, define routing in configuration. Mapping reshapes edge-local subjects into core-global subjects and can normalize naming for multi-tenant environments.

Benefits

- No custom routing logic

- Routing rules evolve without redeploying services

- Explicit event paths and improved governance
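As a rough illustration of declarative mapping, the sketch below keeps routing rules in a table and rewrites edge-local subjects into core-global ones. The `{1}`, `{2}` placeholder syntax, the `MAPPINGS` table, and the subject names are invented for this example; real platforms define their own mapping syntax in server configuration.

```python
def map_subject(source_pattern, dest_template, subject):
    """Rewrite `subject` if it matches `source_pattern`; otherwise return None.

    Each '*' in the pattern captures one token, referenced in the template
    as {1}, {2}, ... (a placeholder syntax made up for this sketch).
    """
    p_tokens = source_pattern.split(".")
    s_tokens = subject.split(".")
    if len(p_tokens) != len(s_tokens):
        return None
    captures = []
    for p, s in zip(p_tokens, s_tokens):
        if p == "*":
            captures.append(s)
        elif p != s:
            return None
    out = dest_template
    for i, value in enumerate(captures, start=1):
        out = out.replace("{" + str(i) + "}", value)
    return out


# Hypothetical rule table: routing lives in data, not in a router service.
MAPPINGS = [
    ("sensors.*.*", "core.plant-a.{2}.{1}"),
    ("alarms.*", "core.plant-a.alarms.{1}"),
]


def route(subject):
    """Apply the first matching rule; unmapped subjects stay local (None)."""
    for pattern, template in MAPPINGS:
        mapped = map_subject(pattern, template, subject)
        if mapped is not None:
            return mapped
    return None
```

Changing where events go then means editing the rule table, not redeploying the publishers or consumers.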

3.3 Weighted Routing: Canary Deployments and Capacity-Aware Distribution

Weighted routing supports canaries and A/B tests:

- 70% to v1, 30% to v2

- Observe failure rates and behavior

- Ramp safely

Also useful for regional capacity differences and load spreading without extra load-balancer tiers.
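The 70/30 split can be sketched as a weighted random choice per message. The destination names `orders.v1` and `orders.v2` and the helper `pick_route` are assumptions for the example; a platform with weighted mappings would apply the split in configuration rather than in client code.

```python
import random


def pick_route(weights, rng=random):
    """Choose one destination with probability proportional to its weight."""
    destinations = list(weights)
    return rng.choices(destinations, weights=[weights[d] for d in destinations], k=1)[0]


rng = random.Random(42)  # seeded so the simulation is repeatable
weights = {"orders.v1": 70, "orders.v2": 30}
counts = {destination: 0 for destination in weights}
for _ in range(10_000):
    counts[pick_route(weights, rng)] += 1

share_v2 = counts["orders.v2"] / 10_000  # should land close to 0.30
```

Ramping the canary is then a matter of changing the two weights, for example to 50/50 and later 0/100, while watching failure rates at each step.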

3.4 Push vs Pull Consumption: Managing Backpressure and Bursts

Push delivers messages as they arrive. Pull lets consumers request batches when ready, giving autonomy in fast-producer/slow-consumer scenarios—especially important when backlog catch-up occurs after reconnect.
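A small simulation shows why pull helps during catch-up: the consumer asks for a bounded batch only when it is ready, so a large backlog never arrives all at once. `Backlog`, `fetch`, and `drain` are hypothetical names for this sketch and do not mirror any specific client library.

```python
from collections import deque


class Backlog:
    """Stand-in for a durable stream holding messages that await delivery."""

    def __init__(self, messages=()):
        self._queue = deque(messages)

    def fetch(self, batch_size):
        """Hand over at most `batch_size` messages; the consumer decides when to ask."""
        batch = []
        while self._queue and len(batch) < batch_size:
            batch.append(self._queue.popleft())
        return batch


def drain(backlog, handle, batch_size=100):
    """Pull and process batches until the backlog is empty; return the batch sizes."""
    batch_sizes = []
    while True:
        batch = backlog.fetch(batch_size)
        if not batch:
            return batch_sizes
        for message in batch:
            handle(message)
        batch_sizes.append(len(batch))


processed = []
sizes = drain(Backlog(range(250)), processed.append, batch_size=100)
```

Here a backlog of 250 messages is consumed as three bounded batches, and the consumer could just as easily pause between fetches if downstream systems need time to recover.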

"Pull consumption lets consumers control ingestion—especially after outages."

Key Takeaways

- Filtering prevents firehoses and reduces consumer complexity.

- Mapping makes routing declarative and operationally manageable.

- Weighted routing enables safer rollouts and capacity-aware distribution.

- Push vs pull is an architecture choice, not a client preference.

Foundation for Resilience

Hybrid Event Platforms and the Full Event Mesh

Edge-to-core architectures need both:

- Event distribution (stateless pub/sub, request-reply)

- Event streaming (stateful, durable, replayable streams)

A hybrid event platform supports both modes natively, reducing platform sprawl.

"Hybrid eventing reduces the number of moving parts—exactly what edge systems need."

Leaf Nodes and Hubs: A Practical Topology Model

Use simple terms:

Leaf Nodes (Spokes)

Edge nodes close to devices

Hubs

Core nodes coordinating global consumers

Define:

- Which subjects are local

- Which are shared leaf↔hub

- What security constraints apply at the boundary

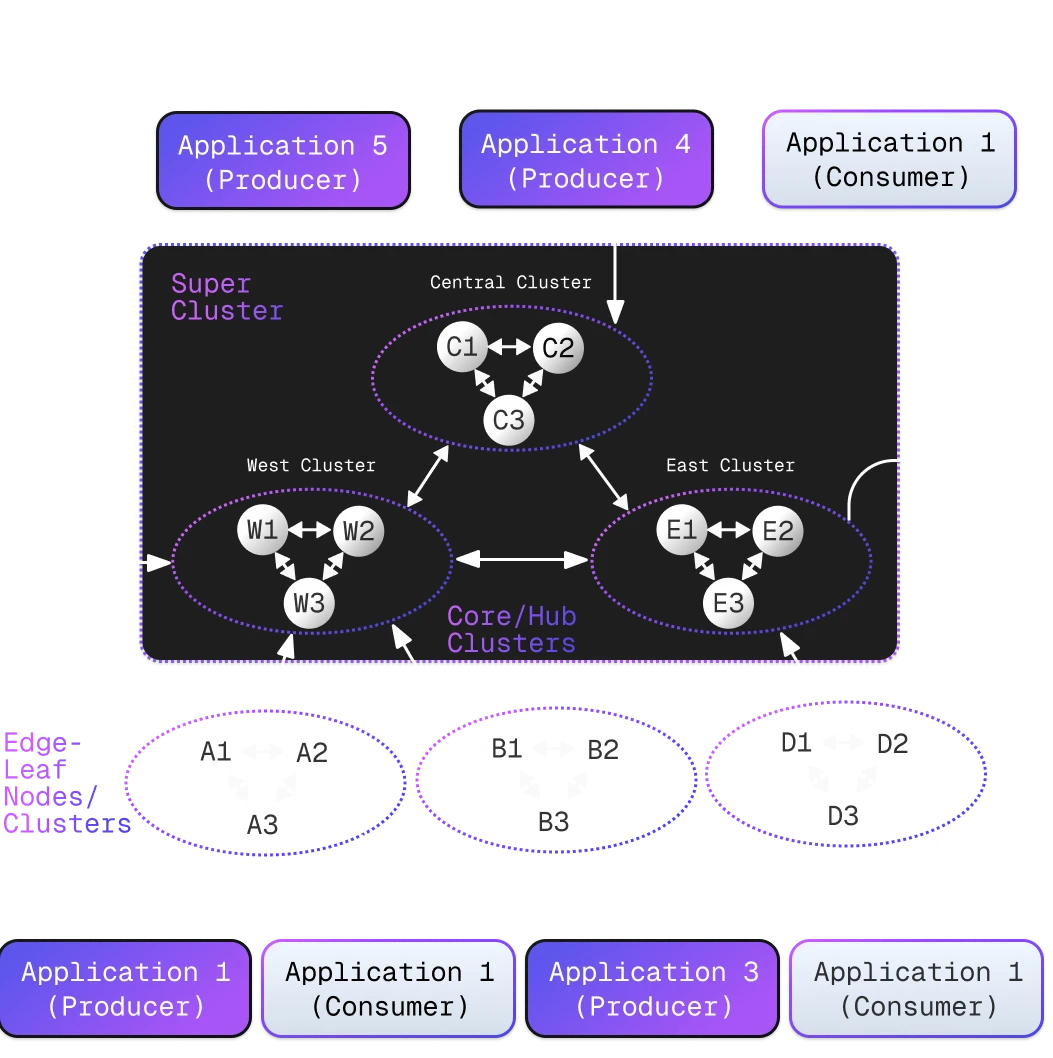

Full Event Mesh: Edge + Core + Multi-Region

Edge and core clusters support HA/FT in both domains. Hubs can also form superclusters across geographies. Leaf clusters connecting to hub clusters across geographies create a Full Event Mesh.

Data Residency and Isolation

Sometimes you must not move data globally. A mesh can isolate traffic via subject taxonomy and constrain cross-region paths, supporting compliance such as GDPR.

Use Case: Global Fleet + Residency

- Edge streams stay in-region by default

- Only permitted aggregates cross regions

- Sensitive streams are controlled and pruned within region
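The residency rules above can be expressed as a routing predicate over the subject taxonomy. This sketch assumes a hypothetical taxonomy of `<region>.<kind>.<rest>` in which only the `agg` kind may cross regions; the names `may_route` and `EXPORTABLE_KINDS` are illustrative.

```python
EXPORTABLE_KINDS = frozenset({"agg"})  # assumption: only aggregates may leave a region


def may_route(subject, target_region, exportable_kinds=EXPORTABLE_KINDS):
    """Decide whether a subject may be delivered to `target_region`."""
    tokens = subject.split(".")
    if len(tokens) < 3:
        return False  # outside the taxonomy: fail closed
    region, kind = tokens[0], tokens[1]
    if target_region == region:
        return True  # in-region delivery is the default
    return kind in exportable_kinds  # cross-region only for permitted kinds
```

Failing closed on subjects that do not fit the taxonomy keeps a naming mistake from turning into an unintended cross-region transfer.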

Clustering Models

Full-Mesh vs Active-Passive

Active-passive broker clusters can bottleneck at high throughput. The typical fix is load balancers and extra capacity—more cost and complexity.

Edge systems need a scalable clustering model.

Full-Mesh Clustering: Active Nodes, Linear Scale

In full-mesh clustering:

- All nodes are active

- Clients connect to any node

- Load is balanced across nodes

- Scaling is linear without extra LB tiers

- Failures trigger reallocation without breaking the system

Active-Passive Issues

- Single active node bottleneck

- Requires additional load balancers

- Passive nodes are wasted capacity

- Failover introduces latency spikes

- More infrastructure complexity

For streaming/state, replicas remain consistent via consensus approaches such as Raft.
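Majority-based consensus protocols such as Raft commit a write once more than half of the replicas have acknowledged it, which determines how many node failures a replicated stream can survive. The helper names below are made up for this short calculation.

```python
def quorum(replicas):
    """Smallest number of replicas that forms a majority."""
    return replicas // 2 + 1


def tolerated_failures(replicas):
    """How many replicas can be lost while a majority still exists."""
    return replicas - quorum(replicas)


summary = {n: (quorum(n), tolerated_failures(n)) for n in (1, 3, 4, 5)}
```

Three replicas tolerate one failure and five tolerate two, while going from three to four adds cost without adding fault tolerance, which is why odd replica counts are the usual choice.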

"Clustering architecture becomes a cost model when millions of events per second are normal."

Visibility

Observability and End-to-End Traceability

Some event brokers can be embedded. Vehicles may use internal eventing for subsystem coordination and upstream connectivity—effectively acting as edge clusters. Gateways can also form edge layers that "daisy-chain" routing.

Historically, bandwidth limits forced summarization, and upstream decision-making became approximate. Modern hybrid event platforms can trace individual events end-to-end in real time—enabling faster and more accurate decisions.

"Traceability turns approximate decisions into correct decisions."

Use Case: Threat Detection

Detect

Detect anomalies at the edge

Trace

Trace propagation across the mesh

Mitigate

Trigger targeted mitigation
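A minimal way to picture end-to-end traceability is an event envelope that carries a trace identifier and records each node it passes through. The `TracedEvent` type, the `record_hop` helper, and the node names are assumptions for this sketch, not a feature description of any product.

```python
import time
import uuid
from dataclasses import dataclass, field


@dataclass
class TracedEvent:
    """Event envelope with a trace id and the list of nodes it has crossed."""

    subject: str
    payload: str
    trace_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    hops: list = field(default_factory=list)


def record_hop(event, node, clock=time.time):
    """Append (node, timestamp) as the event passes through a node."""
    event.hops.append((node, clock()))
    return event


def path(event):
    """Ordered list of nodes the event travelled through."""
    return [node for node, _ in event.hops]


alert = TracedEvent("alerts.intrusion", "door-open")
for node in ("leaf-berlin", "hub-eu", "hub-us"):
    record_hop(alert, node)
```

With the path and trace id attached, an operator can see where an anomaly entered the mesh and which nodes it reached, then direct mitigation at those nodes only.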

Why Observability Matters at the Edge

- Real-time visibility into device health and connectivity status

- End-to-end event tracing from device to core and back

- Faster root cause analysis when issues occur

- Proactive alerting before problems cascade

Edge Movement Patterns

Common Communication Shapes

Modern edge systems are bidirectional. Understanding common communication patterns helps you design explicit paths rather than discovering them in production.

"Most edge systems repeat the same movement patterns—design for them explicitly."

In-leaf communication

Regional coordination between devices in the same edge cluster

Leaf → leaf via hub

Targeted delivery from one edge region to another through the core

Leaf broadcast via hub

Alerts and threat notifications distributed across the mesh

Hub → individual leaf

Commands and updates sent to specific edge nodes

Hub broadcast to all leaves

Fleet-wide updates and configuration changes

Streaming at the Edge

Consume, Mirror, Merge

Streaming provides durable chronology and replay. In edge systems, it supports:

- Local intelligence and anomaly detection

- Safe forwarding to core

- Controlled catch-up after disconnects

Three Common Edge-to-Core Streaming Patterns

Core consumes edge streams directly

Triage and filter before core distribution. Core systems pull from edge streams, applying filtering logic to determine what data moves inward.

Mirror edge streams into core

Distribute raw edge data. Create exact replicas of edge streams in the core for analytics, backup, or compliance purposes.

Merge many edge streams into one core stream

Global analytics and correlation. Combine telemetry from multiple edge locations into a unified stream for cross-site analysis.
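The merge pattern can be illustrated by combining per-site streams into one timeline. This sketch assumes each event is a `(timestamp, site, payload)` tuple and that every edge stream is already ordered by timestamp; both are assumptions of the example, and a real platform may order a merged stream by arrival rather than by event time.

```python
import heapq
from operator import itemgetter


def merge_streams(*streams):
    """Lazily merge per-site streams, each already sorted by timestamp."""
    return heapq.merge(*streams, key=itemgetter(0))


plant_a = [(1, "plant-a", "temp=20"), (4, "plant-a", "temp=22")]
plant_b = [(2, "plant-b", "temp=19"), (3, "plant-b", "temp=21")]

core_stream = list(merge_streams(plant_a, plant_b))
timestamps = [event[0] for event in core_stream]
sites = [event[1] for event in core_stream]
```

Keeping the site in each event preserves provenance after the merge, so cross-site analysis can still attribute every reading to its source.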

"Streaming at the edge is the backbone of resilience and intelligence."

Summary

Mitigating Risk, Capturing Reward

Life on the edge can be challenging and risky. But the reward can be enormous when systems respond in real time and decisions are made closer to the source.

A Successful Risk Mitigation Strategy Includes:

The Edge Advantage

When designed correctly, edge-to-core systems deliver faster response times, reduced bandwidth costs, improved reliability during network disruptions, and better compliance with data residency requirements. The key is choosing patterns and platforms that embrace the inherent challenges of distributed computing rather than fighting against them.

About NATS and Synadia

The characteristics described in this guide are embodied by NATS, which supports both lightweight event distribution and durable streaming with JetStream, and is designed for edge-core scenarios. Synadia provides a platform for provisioning, managing, and operating NATS infrastructure -- streamlining operations and enhancing how teams run NATS across environments.

NATS

Open Source Messaging

- Lightweight, high-performance event distribution

- Durable streaming with JetStream

- Purpose-built for edge-core scenarios

- 50+ client libraries, cloud-native design

Synadia

Enterprise Platform

- Provisioning, management, and operations

- Multi-environment and multi-cloud support

- Enterprise support and SLAs

- Secure, scalable control plane

Frequently Asked Questions

What is edge-to-core eventing?

Edge-to-core eventing is an architecture approach where devices, gateways, and edge services publish events that route to core systems for processing—while also supporting bidirectional messaging for control and updates. It emphasizes resilience to disconnects, secure boundaries, and precise routing.

Why is intermittent connectivity such a big deal at the edge?

Because edge devices roam, lose power, and run on unstable networks. Architectures that assume constant connectivity risk data loss, delayed decisions, and cascading failures.

What does "store-and-forward" mean in edge architectures?

Store-and-forward is a pattern where the edge stores events locally during disconnects and forwards them when connectivity returns—allowing safe catch-up without losing fidelity.

Why treat edge and core as separate operational realms?

Separation reduces blast radius. Edge volatility (disconnects, tampering risk, bursty traffic) shouldn’t automatically become core volatility. Separate realms also allow stricter boundary security and clearer routing policy.

What are the four main challenges in edge-to-core systems?

Connectivity, security, distribution, and observability.

What is "flow control" in event-driven architecture?

Flow control is the set of capabilities that shape how events move through the system—filtering, mapping/traffic shaping, weighted routing, and consumption models (push vs pull).

What is a hybrid event platform?

A hybrid event platform supports both stateless event distribution (pub/sub, request-reply) and stateful event streaming (durable, replayable streams). That matters at the edge because you often need real-time signals and durable telemetry.

What is a Full Event Mesh?

A Full Event Mesh connects edge "leaf" nodes/clusters to core "hub" clusters (often multi-region), enabling resilient edge-to-core routing with policy control and residency constraints.

How do edge-to-core systems support data residency (e.g., GDPR)?

By isolating traffic with subject taxonomy, constraining cross-region routing paths, and managing retention/deletion policies in-region.

Where can I learn core messaging concepts (subjects, consumers, patterns)?

Synadia Education provides comprehensive resources for learning NATS messaging patterns, subjects, and consumer configurations at synadia.com/education.